Speech recognition or how to make machines understand humans

#TechWatchbySeb — Weekly series of Tech sectors decrypted — Issue #27 — June 16th, 2021

Hello my friends 🖐,

and welcome back to the #TechWatchbySeb ☕️ - The weekly series of Tech sectors decrypted.

If you want to receive the weekly newsletter #TechWatchbySeb, feel free to subscribe. I do my best to share only quality content 🤓.

This week, I will share insights on another area of Artificial Intelligence, Speech Recognition, while traveling to the U.K. 🇬🇧, France 🇫🇷, and Ireland 🇮🇪.

This new article is part of a series where I decrypt the different clusters of Artificial Intelligence. In the past weeks, I covered:

This week we will have a deeper look at Speech and Voice recognition.

Speech and Voice recognition are mainly used upstream of Natural Language Processing technologies. While text/character recognition and speech/voice recognition allow computers to take in the information, NLP allows them to make sense of it.

The potential of this market is also quite impressive: Statista forecasts that the global voice recognition market will grow from $10.7 bn in 2019 to $27.16 bn by 2025. The estimated CAGR from 2020 to 2025 amounts to 16.8 percent.
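As a quick sanity check on the figures quoted above, the compound annual growth rate can be recomputed from the two endpoints. This is only a minimal sketch, not Statista's methodology: the function name `cagr` is hypothetical, and it assumes growth is compounded over the six years between the 2019 and 2025 values.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in the article, in billions of US dollars.
market_2019 = 10.7
market_2025 = 27.16

# Assumption: six compounding periods (2019 -> 2025).
rate = cagr(market_2019, market_2025, years=2025 - 2019)
print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption, the implied rate comes out close to the 16.8 percent quoted above.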

Definition of Speech Recognition and examples of Applications

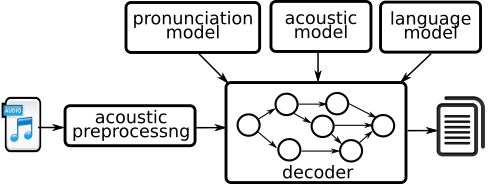

Speech recognition, also known as automatic speech recognition (ASR), computer speech recognition, or speech-to-text, is a capability that enables a program to process human speech into a written format. While it’s commonly confused with voice recognition, speech recognition focuses on the translation of speech from a verbal format to a text one whereas voice recognition just seeks to identify an individual user’s voice.

As explained by IBM, organisations can customise this technology to their business case by adapting the models in the following areas.

Language weighting: Improve precision by weighting specific words that are spoken frequently (such as product names or industry jargon);

Speaker labeling: Output a transcription that cites or tags each speaker’s contributions to a multi-participant conversation;

Acoustics training: Attend to the acoustical side of the business. Train the system to adapt to an acoustic environment (like the ambient noise in a call center) and speaker styles (like voice pitch, volume and pace);

Profanity filtering: Use filters to identify certain words or phrases and sanitize speech output.
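To make the last of these areas more concrete, here is a minimal sketch of profanity filtering applied to a transcript after recognition. It is an illustration only and not IBM's API: the function `mask_profanity`, the word list and the sample transcript are all hypothetical, and a production system would likely rely on the speech service's own filtering options.

```python
import re


def mask_profanity(transcript: str, blocked_words: set[str]) -> str:
    """Replace each blocked word in a transcript with asterisks of the same length."""
    if not blocked_words:
        return transcript
    # Match whole words only, case-insensitively, so "darn" does not hit "darning".
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(w) for w in sorted(blocked_words)) + r")\b",
        flags=re.IGNORECASE,
    )
    return pattern.sub(lambda m: "*" * len(m.group(0)), transcript)


# Hypothetical word list and transcript, for illustration only.
blocked = {"darn", "heck"}
raw = "Well darn, what the HECK happened to my order?"
clean = mask_profanity(raw, blocked)
print(clean)
```

Masking rather than deleting the words keeps the sentence length intact, which helps when the transcript is later aligned with audio timestamps or speaker labels.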

Meanwhile, speech recognition continues to advance. Companies like IBM are making inroads in several areas to improve human-machine interactions.

There is a wide scope of potential applications for this technology, as described by IBM:

Security: Voice-based authentication adds a viable level of security.

Automotive: Speech recognizers improve driver safety by enabling voice-activated navigation systems and search capabilities in car radios.

Healthcare: Doctors and nurses leverage dictation applications to capture and log patient diagnoses and treatment notes.

Technology: Virtual assistants are becoming integrated within our daily lives, particularly on our mobile devices. We use voice commands to access them through our smartphones, such as through Google Assistant or Apple’s Siri, for tasks, such as voice search, or through our speakers, via Amazon’s Alexa or Microsoft’s Cortana, to play music. They’ll only continue to integrate into the everyday products that we use, fueling the “Internet of Things” movement.

Speech and Voice recognition in the European Tech Ecosystem

Recent data shows that all the major tech giants in the world, especially the GAFA and BATX, are investing heavily in this field.

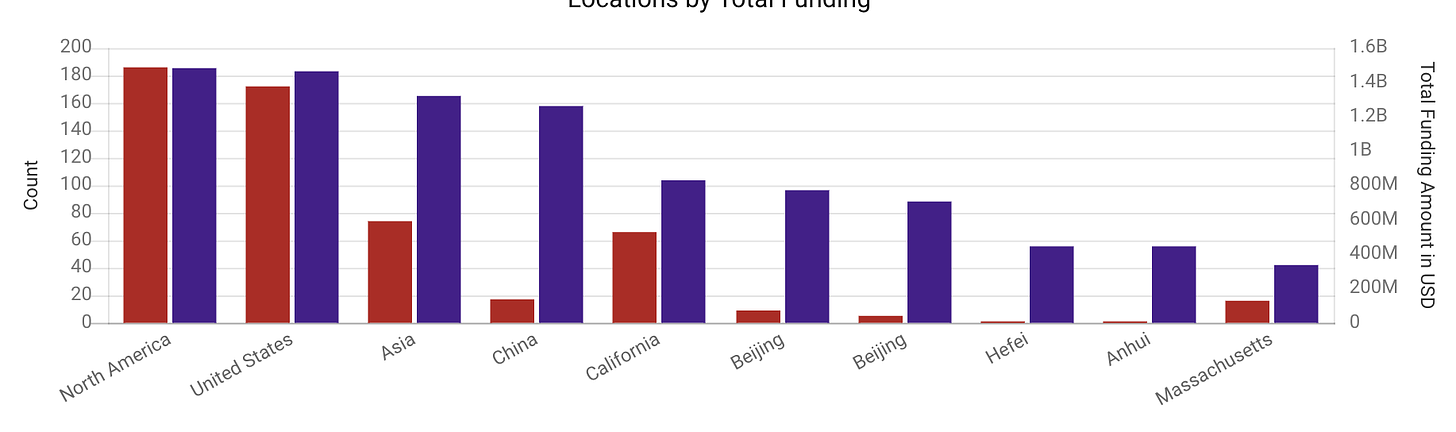

The graph shows the upward trend of the Speech Recognition market, even if it remains limited in absolute terms: only 465 companies are referenced on Crunchbase, and together they have raised around $3Bn. In comparison, Computer Vision was around $9Bn in funding and NLP around $6Bn.

However, the trend is quite promising, as in 2020 we counted only $318M in funding.

Now, if we look at the geographical distribution, we can see a strong dominance of North American and Chinese companies. They represent around 98% of the funded companies in this tech segment. As with Natural Language Processing, it is quite alarming that Europe does not appear in those charts.

What are the most funded European companies?

Fluency Voice Technology 🇬🇧 provides on-premise and hosted packaged speech recognition solutions for call centers. The company raised around $13M and has since been acquired by Syntellect (a US-based firm);

Allo-Media 🇫🇷 is a real-time phone call transcription platform for data activation. The company has raised a total of $12M.

SoapBox Labs 🇮🇪 develops privacy-first speech recognition software that delivers voice-enabled experiences for kids of all ages, accents, and dialects. The company has raised a total of $11M.

As expected from the graph presented above, European companies developing Speech Recognition technologies are small compared to the American and Chinese market leaders, which have already raised hundreds of millions.

What are the most active funds in Speech Recognition in Europe?

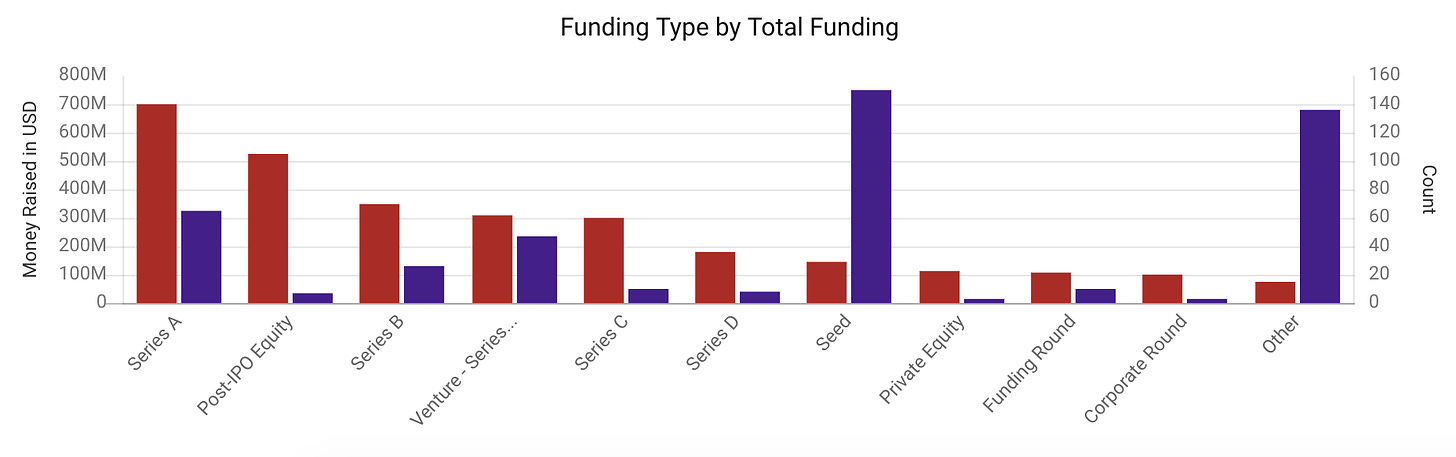

We can see that there is already a good level of maturity in the market. As the graph shows, there have been many funding rounds at various stages, including Series B, C and D.

Among European investors, only one public fund has invested in several deals, while the others have each participated in a single deal:

EASME 🇪🇺 is the European Union executive agency for SMEs, in charge of the Enterprise Europe Network, COSME, and other programs. The fund has backed 6 Speech Recognition deals.

Other investors involved in Speech Recognition deals: Kima Ventures, Enterprise Ireland (public), BPI France (public), Eight Road Ventures…

Conclusion

As the digital revolution we are living through continues, more use cases will likely be developed to improve the way machines understand human action, not only through touching or clicking on screens but also through speaking with machines as we do with other humans. From that perspective, Speech Processing is a key technology that will help machines better interact with humans, and it is the first step before leveraging other AI technologies such as NLP. That’s why it is easy to understand the strong position of the GAFA and the BATX, which contribute to developing their local ecosystems of Speech Recognition technologies. Sadly, Europe is lagging behind in this tech race, and since the most successful European tech companies are being acquired by American firms, the old continent is likely to stay behind…

That’s it for this week, wishing you a great week ahead 🖐

Stay safe ❤️